- 82 Posts

- 44 Comments

11·3 days ago

Those might just be LoRA-merged models, not full fine-tunes. From what I heard, fine-tuning doesn’t work because the models are distilled. You’d have to find a way to undistill them to train them.

11·3 days ago

Last I heard, LoRAs cause catastrophic forgetting in the model, and full fine-tuning doesn’t really work.

91·3 days ago

You can never learn anything with these clickbait headlines.

21·3 days ago

I don’t think so. They’re going to have to do a lot better than a tutorial to win people back. That said, it also sucks that the two Flux models are distilled, which makes them close to impossible to fine-tune.

137·7 days ago

Or just not show people what you’re typing.

7·17 days ago

I can’t tell if this is a joke or not.

3·19 days ago

The way you described is already how Civitai works. Maybe it’s to keep the moderation of the two sites cleanly separated. This way the team on green can do whatever they want on green.

4·19 days ago

A computer like that is useful outside of work. I’d pay for it out of pocket if I had to.

2·21 days ago

You keep moving the goalposts and putting words in my mouth. I never said you can do new things out of nothing. Nothing I mentioned approaches, equals, or exceeds the effort of training a model.

You haven’t answered a single one of my questions, and you are not arguing in good faith. We’re done here. I can’t say it’s been a pleasure.

1·21 days ago

Do you have any examples of how they fail? There are plenty of ways to explain new concepts to models.

https://arxiv.org/abs/2404.19427 https://arxiv.org/abs/2406.11643 https://arxiv.org/abs/2403.12962 https://arxiv.org/abs/2404.06425 https://arxiv.org/abs/2403.18922 https://arxiv.org/abs/2406.01300

4·22 days ago

What kind of creativity are you talking about then? I’ve also never heard of a bloated model. Which models are bloated?

6·22 days ago

> But at what point does that guidance just become the dataset you removed from the training data?

The whole point is that it didn’t know the concepts beforehand, and no, it doesn’t become the dataset. Observations made about the training data are encoded in the model’s weights during training. After that, the dataset is never relevant again, because the weights are locked in.

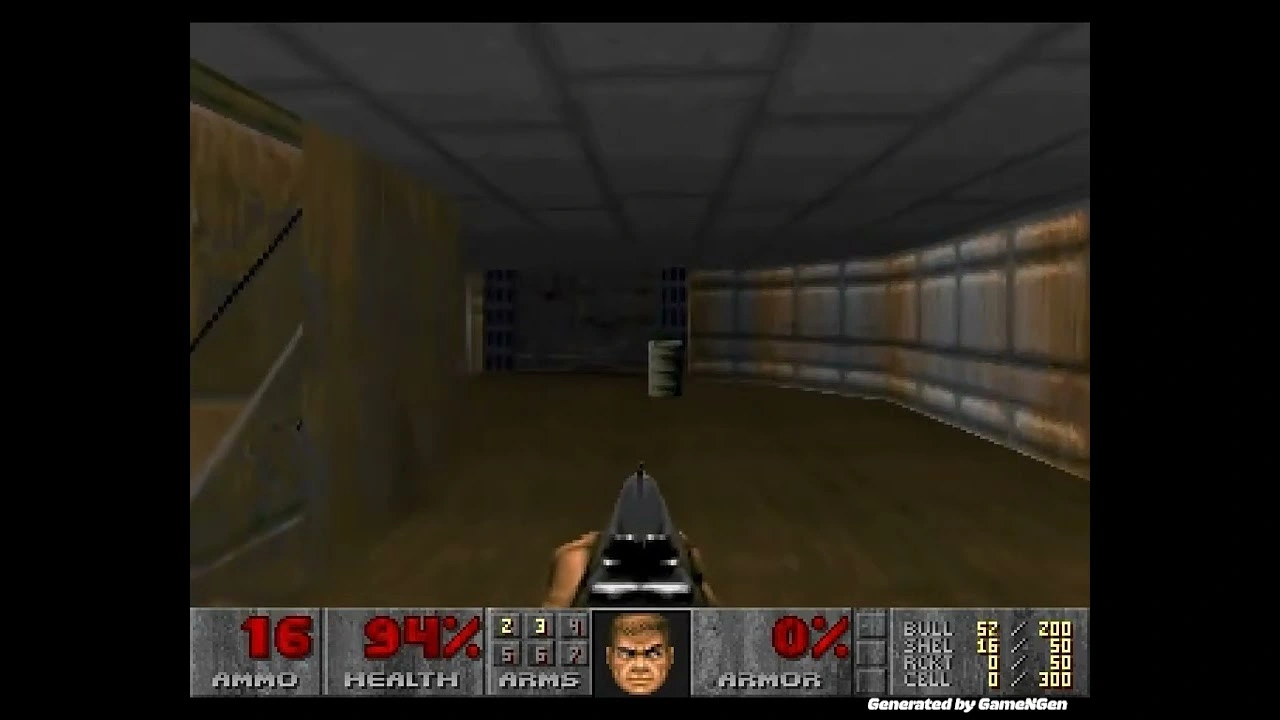

> To get it to run Doom, they used Doom.

> To realize a new genre, you’ll “just” have to make that game the old-fashioned way, first.

Or you could train a more general model. These things happen in steps; research is a process.

6·22 days ago

There are more forms of guidance than just raw words. Just off the top of my head, there’s inpainting, outpainting, ControlNets, prompt editing, and embeddings. The researchers who pulled this off definitely didn’t do it with text prompts.

8·22 days ago

I mean, you’ve never seen a purple elephant with a tennis racket. None of that exists in the dataset, since elephants are neither purple nor tennis players. Exposure to all the individual elements allows for generation of concepts outside the existing data, even though they don’t exist in reality or in the dataset.

29·23 days ago

If you’re on Windows, you should return it before it’s too late. It isn’t going to get better.

3·24 days ago

Yeah, I didn’t notice the login since I was signed in.

4·24 days ago

Oh, that makes sense.

2·24 days ago

I don’t really know myself. I assumed it would install and update stuff for you.

That was really cool.